Chapter 1

Hospital Introduction

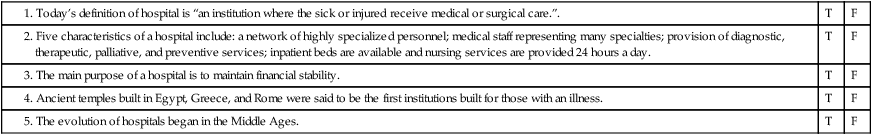

1. Define terms, phrases, abbreviations, and acronyms.

2. Provide an overview of significant factors that influenced the evolution of hospitals.

3. Outline key factors that led to the establishment of hospitals in the United States and contributed to the development of our modern-day health care delivery systems.

4. Discuss how hospital organizational structures are designed to contribute to accomplishing the hospital’s goals and mission.

5. List and describe four categories of functions in a hospital.

6. Describe functions performed by various departments.

7. Demonstrate an understanding of different types of hospitals.

8. Identify and discuss three service levels where patient care services are rendered in a hospital.

American College of Surgeons (ACS)

American Hospital Association (AHA)

American Medical Association (AMA)

Electronic health record (EHR)

Evaluation and Management (E/M)

Health Information Management (HIM)

Hospital Standardization Program

Joint Commission on Accreditation of Healthcare Organizations (JCAHO)

Medicare Severity-Diagnosis Related Groups (MS-DRG)

Prospective Payment System (PPS)

Quality Improvement Organization (QIO)

Hospital Introduction

1. A network of highly specialized personnel organized into departments designed to carry out the clinical, administrative, and operational tasks required to provide effective and efficient patient care services

2. A medical staff representing many specialties, organized to provide health care services from an interdisciplinary approach

3. Diagnostic, therapeutic, palliative, and preventive services

4. Inpatient beds for patients who require care for longer than 24 hours

Evolution of Hospitals

Ancient Medicine and Healing Centers

The practice of medicine dates back to ancient times. There is evidence of primitive procedures, such as boring a hole into the skull (trephining), and of the use of medicinal herbs and fungi dating back to 10,000 B.C. or earlier (Figure 1-1). These procedures and natural remedies appear to have been effective, and research has shown that the treatment of disease and wounds was surprisingly “modern” in approach. Despite this “modern” approach, medical care during this period was dominated by religious beliefs, and healing was intertwined with spiritual and ritualistic ceremonies.

Classical Greece and Rome

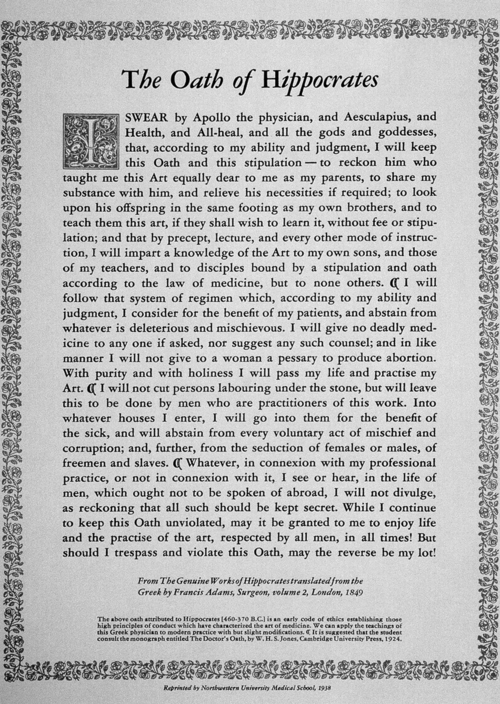

Hippocrates laid the foundations for the development of the Hippocratic Oath (Figure 1-2). The Hippocratic Oath outlines standards for medical and ethical behavior that physicians still follow today. Hippocrates traveled throughout Greece practicing medicine. He founded a medical school in Greece and began teaching his ideas. Hippocrates is known as the “Father of Medicine” because his philosophies and methods formed the foundations of the medicine and treatment we recognize today.

Roman hospitals, commonly referred to as field hospitals, were found primarily in military settlements. Medical care provided in field hospitals showed evidence of the first systematic approach to medical care, one that emphasized diet, sanitation, and hygiene. Advances in medical treatments and instruments were made using knowledge gained from treating soldiers injured in many wars. Instruments found in a Roman legion’s field kit included suture kits, scalpels, wound spreaders, and splints (Figure 1-3). The drugs used to clean wounds and promote healing were similar to those used today.

History of Hospitals in the United States

Scientific Advances

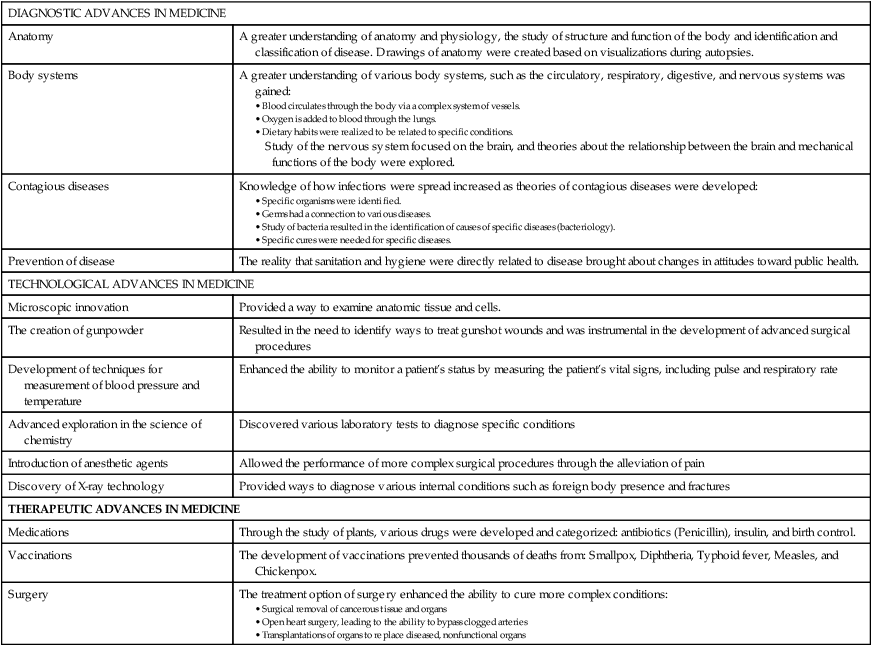

The focus of medicine shifted to research and scientific exploration of the cause of disease. Discoveries in technology led to the invention of new diagnostic tools used in the identification and treatment of disease (Table 1-1). Diagnostic, technological, and therapeutic advances made in medicine through the 20th century contributed to the growth of hospitals.

TABLE 1-1

| DIAGNOSTIC ADVANCES IN MEDICINE | |
| --- | --- |
| Anatomy | A greater understanding of anatomy and physiology (the study of the structure and function of the body) and improved identification and classification of disease. Anatomical drawings were created based on observations made during autopsies. |
| Body systems | A greater understanding of various body systems, such as the circulatory, respiratory, digestive, and nervous systems, was gained. |
| Contagious diseases | Knowledge of how infections were spread increased as theories of contagious diseases were developed. |
| Prevention of disease | The recognition that sanitation and hygiene were directly related to disease brought about changes in attitudes toward public health. |
| TECHNOLOGICAL ADVANCES IN MEDICINE | |
| Innovations in microscopy | Provided a way to examine tissues and cells. |
| The invention of gunpowder | Created the need to find ways to treat gunshot wounds and was instrumental in the development of advanced surgical procedures. |
| Development of techniques for measuring blood pressure and temperature | Enhanced the ability to monitor a patient’s status by measuring the patient’s vital signs, including pulse and respiratory rate. |
| Advanced exploration in the science of chemistry | Led to the discovery of various laboratory tests used to diagnose specific conditions. |
| Introduction of anesthetic agents | Allowed more complex surgical procedures to be performed by alleviating pain. |
| Discovery of X-ray technology | Provided ways to diagnose internal conditions such as fractures and the presence of foreign bodies. |
| THERAPEUTIC ADVANCES IN MEDICINE | |
| Medications | Through the study of plants and other natural sources, various drugs were developed and categorized, including antibiotics (penicillin), insulin, and birth control drugs. |
| Vaccinations | The development of vaccinations prevented thousands of deaths from smallpox, diphtheria, typhoid fever, measles, and chickenpox. |
| Surgery | The treatment option of surgery enhanced the ability to cure more complex conditions. |

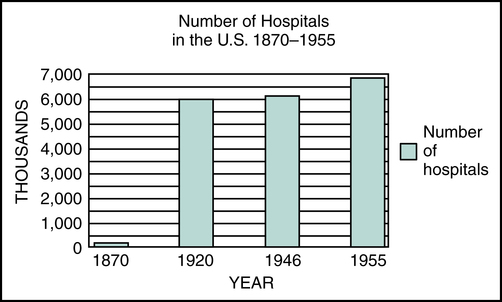

Economic Influences on Hospital Development

Advanced medical treatments and standards of care contributed to an increase in the number of patients seen in hospitals. This created a demand for more personnel and equipment, which in turn raised the cost of hospital care. As the cost of hospital care increased, individuals and hospitals began to experience financial difficulties. Figure 1-4 shows the increase in the number of hospitals from 1870 to 1955.