CHAPTER 1

Measuring Outcomes in Advanced Practice Nursing: Practice-Specific Quality Metrics

April N. Kapu, Corinna Sicoutris, Britney S. Broyhill, Rhonda D’Agostino, and Ruth M. Kleinpell

Chapter Objectives

1. Discuss the use of practice-specific quality metrics to identify outcomes of advanced practice registered nurse (APRN) care

2. Identify strategies for developing practice-specific quality metrics

3. Highlight examples of using practice-specific quality metrics to demonstrate the effect of APRN care

Chapter Discussion Questions

1. What are two practice-specific quality metrics that can be used to identify outcomes of APRN practice?

2. How can practice-specific quality metrics be used to identify the impact of APRN care?

3. What strategies can be used to measure APRN impact using role-specific metrics? List several examples.


Demonstrating the impact of the APRN role is an essential component of professional practice. The Institute of Medicine (IOM) report on the future of nursing highlighted the importance of promoting the ability of APRNs to practice to the full extent of their education and training and of identifying nurses’ contributions to delivering high-quality care (IOM, 2010). Demonstrating APRN impact requires an assessment of the structures, processes, and outcomes associated with APRN performance and the care delivery systems in which they practice (Kleinpell & Alexandrov, 2014). The increased focus on assessing the outcomes of APRN practice reflects a growing emphasis on outcomes, which have become a recognized component of most health care initiatives. The demand for measuring outcomes of care has been emphasized by federal and state regulatory agencies, practice guidelines, employers, and consumer groups. Health care organizations now actively monitor outcomes both as a means of evaluation and as a requirement for accreditation and certification.

At the same time, a growing number of organizations are utilizing APRNs in a variety of specialty practice roles. Differentiating the impact and value of these roles has become a priority, especially as programs such as the Centers for Medicare & Medicaid Services (CMS) value-based purchasing and Medicare Access and CHIP (Children’s Health Insurance Program) Reauthorization Act of 2015 (MACRA) initiatives are being implemented. These programs are aimed at providing quality care while improving value and linking performance to hospital reimbursement structures (CMS, 2016). As outcome evaluation and ongoing performance assessment remain essential components of professional practice, practice-specific quality metrics can be used to demonstrate APRN impact. This chapter discusses strategies for identifying and developing quality metrics to demonstrate the impact and value of the APRN role. Institutional exemplars are provided to showcase processes and methods being used to identify APRN outcomes.

CAPTURING APRN IMPACT


A number of single-institution studies and synthesis reviews of APRN outcomes have highlighted the impact of APRN roles (Albers-Heitner et al., 2012; Alexander-Banys, 2014; Hatem, Sandall, Devane, Soltani, & Gates, 2008; Hogan, Seifert, Moore, & Simonson, 2010; Jessee & Rutledge, 2012; Kapu, Kleinpell, & Pilon, 2014; Laurant et al., 2005; Newhouse et al., 2011; Sawatzky et al., 2013). While traditional outcome metrics such as length of stay (LOS) or readmission rates can be used, teasing out the individual impact of the APRN role can be difficult because care is often provided in team-based, collaborative models. Other chapters in this book outline in detail specific studies that have demonstrated APRN outcomes related to the four APRN roles: clinical nurse specialist, certified registered nurse anesthetist, certified nurse-midwife, and nurse practitioner (NP). Collectively these chapters provide a comprehensive review of APRN-role-specific outcomes. Exhibit 1.1 provides several examples of general outcome metrics used to highlight APRN role impact.

When developing processes to measure APRN impact, a number of specific issues need to be considered, including the type and number of APRN roles (e.g., do hospital units have 24/7 APRN coverage?), institution-specific practices (type of electronic health record and ability to sort APRN patient panels), and high-value organizational quality goals that can be targeted (e.g., has a clinic had an increase in wait time, or has a hospital unit had a recent increase in sentinel events?). Garnering administrative support for building the information systems needed for data abstraction and ongoing reporting of APRN outcome data is essential. Exhibit 1.2 outlines several considerations for identifying APRN metrics.

EXHIBIT 1.1 Examples of Outcome Metrics for APRNs

Blood glucose control

Symptom management

Patient lengths of stay

Costs of care

Smoking cessation

Adverse events (e.g., accidental extubation)

Patient and family knowledge

Patient self-efficacy

Urinary incontinence rates

Lipid management

Blood pressure control

Fall rates

Staff nurse knowledge

Staff nurse retention rates

Nosocomial infection rates

Readmission rates

Nutritional intake

Skin breakdown rates

Restraint use

Hand hygiene compliance

Patient and family satisfaction rates

Nurse satisfaction rates

Caregiver knowledge, satisfaction

Rates of adherence to best practices

APRNs, advanced practice registered nurses.

Strategies that can be used to measure APRN impact include establishing role-specific metrics, planning for outcome evaluation when any new role is established, and building in outcome assessment as a part of the Ongoing Professional Practice Evaluation (OPPE) processes of an institution. Nationally, a number of organizations are assimilating increasing numbers of APRNs. Focusing on capturing the impact of APRNs becomes an essential component of demonstrating return on investment and impact of the role. Several institutional exemplars are included to highlight the process of identifying practice-specific quality metrics to quantify APRN role impact.

EXHIBIT 1.2 Considerations for Identifying APRN Outcome Metrics

What are outcomes valued by the organization/institution?

What are outcomes valued by the practice?

Has there been an APRN-led initiative that could result in comparison of outcomes?

Is there an opportunity to implement an APRN-led project that could result in comparison of outcomes?

Has there been a new practice guideline implemented by the APRN team that could result in comparison of outcomes?

Is there an opportunity to implement a new practice guideline that could result in comparison of outcomes?

What electronic data capture or records are available?

How can data reports be generated and provided to the APRN team?

Consider identifying metrics as positions are developed/formed

Aim to capture metrics that reflect APRN role activities

Garner information systems support for data abstraction and ongoing reporting

APRN, advanced practice registered nurse.

DEVELOPING QUALITY METRICS: INSTITUTIONAL EXEMPLAR, VANDERBILT UNIVERSITY MEDICAL CENTER


Vanderbilt University Medical Center (VUMC) is a comprehensive cancer, transplant, burn, and children’s center and level 1 trauma center, with over 1,100 patient care beds. There are 850 APRNs and physician assistants (PAs) throughout the inpatient and outpatient environment, spanning the adult and pediatric population. With increasing demand for outcomes demonstrating quality of care, VUMC has developed several dashboards to align with and demonstrate effort toward strategic goals. Some of these goals include decreasing nosocomial infections, decreasing wait times for new appointments, decreasing patient harm index, decreasing mortality rates, improving Hospital Consumer Assessment of Healthcare Providers and Systems (HCAHPS) scores, reducing unexpected hospital readmissions, improving patient experience, and facilitating ideal LOS. These metrics are reflective of a team approach with administrators, physicians, APRNs, nurses, and many others directly or indirectly involved in patient care. There are a few metrics that are tracked specifically to APRNs, such as process performance indicators and resource utilization. An example of a performance indicator is that of ordering blood transfusions, using an APRN-specific dashboard (Exhibit 1.3). This dashboard indicates the APRN’s name, area of practice, number of blood transfusions ordered per month, and number of transfusions ordered within the organizational blood transfusion protocol.
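The two quantities reported on a dashboard of this kind (total transfusions ordered per month and the share ordered within protocol) can be illustrated with a short calculation. The following is a minimal sketch only, not the VUMC implementation; the record fields (`provider`, `month`, `within_protocol`) and the sample data are hypothetical.

```python
from collections import defaultdict

def transfusion_dashboard(orders):
    """Summarize transfusion orders per (provider, month).

    `orders` is an iterable of dicts with hypothetical keys:
    'provider', 'month' (e.g., '2016-03'), and 'within_protocol' (bool).
    """
    totals = defaultdict(int)
    compliant = defaultdict(int)
    for order in orders:
        key = (order["provider"], order["month"])
        totals[key] += 1
        if order["within_protocol"]:
            compliant[key] += 1
    # One dashboard row per provider and month.
    return {
        key: {
            "total": total,
            "within_protocol": compliant[key],
            "pct_within_protocol": round(100.0 * compliant[key] / total, 1),
        }
        for key, total in totals.items()
    }

# Illustrative (made-up) order records.
sample_orders = [
    {"provider": "NP A", "month": "2016-03", "within_protocol": True},
    {"provider": "NP A", "month": "2016-03", "within_protocol": True},
    {"provider": "NP A", "month": "2016-03", "within_protocol": False},
    {"provider": "PA B", "month": "2016-03", "within_protocol": True},
]
dashboard = transfusion_dashboard(sample_orders)
for (provider, month), row in sorted(dashboard.items()):
    print(provider, month, row)
```

In practice the order records would come from the order entry system, and the definition of "within protocol" would be set by the organizational transfusion protocol rather than by a precomputed flag.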

EXHIBIT 1.3 NP/PA Blood Transfusion Dashboard: Total Number of Blood Transfusions per Month and Percentage Within Transfusion Protocol

NP, nurse practitioner; PA, physician assistant.

Quality metrics that are impacted by APRNs are discussed and included in the OPPE process. The OPPE process is implemented through the use of a secure, online data collection tool, providing opportunity for peer, physician leader, and APRN leader competency feedback (Figure 1.1). The competencies included are specific to the role, organization, and practice site. Role-specific competencies may include: professionalism, interpersonal communication, leadership, medical/clinical knowledge, and other competencies specific to advanced practice. Organizational competencies may include: chart review of documentation, controlled substance ordering practices, team effort toward organizational goals, and other indicators of measurable goals. Practice-specific competencies might include: knowledge and application of practice-specific protocols, procedural competencies, quality of provider-to-provider communication, peer feedback, and documentation quality.

Outcome measures of success are reviewed not only during OPPE; these measures are followed closely to demonstrate downstream return on investment in APRN practice. Often new practices are implemented on a pilot basis to compare specific APRN-associated metrics before and after adding APRNs to the practice. Given that the success of the team is reflective of quality and sustainability, APRNs are actively involved in the process of identifying the metrics for achievement of outcomes and regular review of outcomes to determine whether improvement measures are necessary. Overall, outcome measurement has been instrumental in the success of the VUMC advanced practice program, allowing for growth and continued demonstration of organizational value.


FIGURE 1.1 Online data collection tool for APRN OPPE.

APRN, advanced practice registered nurse; BB CABG, beta blockers after coronary artery bypass graft surgery; BG, blood glucose; CAUTI, catheter-associated urinary tract infection; CLABSI, central-line-associated bloodstream infection; DC, discharge; EMR, electronic medical record; NP, nurse practitioner; O/E LOS, observed/expected length of stay; OPPE, Ongoing Professional Practice Evaluation; PA, physician assistant; SCIP, surgical care improvement project; VUH, Vanderbilt University Hospital; WIZ/HEO, order entry system at Vanderbilt.

Source: Vanderbilt University Medical Center.

DEVELOPING QUALITY METRICS: INSTITUTIONAL EXEMPLAR, HOSPITAL OF THE UNIVERSITY OF PENNSYLVANIA


At the Hospital of the University of Pennsylvania (Penn), there has been a long history of measuring the contributions of APRNs. The organization is a large urban academic medical center and regional referral center with a large and growing advanced practice provider (APP) workforce. APPs have been a part of the history at Penn since 1978, and there are well over 1,000 APPs across the health system. Since that time, APPs have been successfully integrated into virtually every clinical service within the hospital and ambulatory settings and are contributing positively to patient care in meaningful and substantive ways.

Historically, much of the early work to assess APP impact was done in the surgical critical care unit, where APPs have been integrated into the intensivist-led interprofessional teams since 2002. At that time, a database was built in Microsoft Access to track demographic variables, including patient identification number, date of birth, and age. Admission and discharge dates for the intensive care unit (ICU) were also documented, along with treatment-specific variables such as ventilator days and ICU diagnosis, and complications such as venous thromboembolism (VTE), sepsis, hospital-acquired infections, and gastrointestinal bleed (GIB). The database was used to track utilization of resources (labs, x-rays) and compliance with evidence-based clinical practice guidelines (CPGs). Through some of this early work around the integration of APPs in ICUs, differences were observed in compliance with evidence-based CPGs in a surgical ICU population (Gracias et al., 2008), complications and LOS in a trauma patient population (Scaff et al., 2004), nursing satisfaction (Haut et al., 2006), readmission mortality (Martin et al., 2015), and resource utilization (Haut et al., 2004). With data generated from the database and the strong support of clinical and executive leadership at the organization, measurement and reporting could be done to demonstrate the impact and outcomes of team-based care in the surgical ICU.

More recently, the APPs at Penn have focused on provider-specific metrics to illustrate impact and articulate their contribution to patients and the health care organization. This has been more challenging than evaluating team-based outcomes, since there is no clear way to establish attribution between a patient and an APP at the organization. Patients are admitted under an attending physician, and the discharging physician on record is used to attribute an admission to a physician. It was not clear, however, whether the discharging APP was the appropriate model to use for APP attribution. In partnership with quality leaders and data analysts at the organization, the advanced practice committee tested several candidate attribution models, including discharging provider, types of orders, number of orders, and admitting APP, to understand and validate patient-to-APP attribution. Based on the work done by this team, it was found that in a team-based model, when an individual APP wrote more than 25% of the orders for a patient, that patient could be attributed to that individual APP. In the end, the goal of this project was to develop quality metric reports (e.g., provider-specific report cards) similar to those issued to attending physicians, with a focus on OPPE quality metrics such as general indicators (LOS, mortality, readmissions), safety indicators (unexpected deaths, central-line-associated bloodstream infection [CLABSI]), and perfect care measures (deep vein thrombosis [DVT] prophylaxis; Table 1.1).
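The order-share attribution rule described above can be expressed as a simple calculation. The sketch below is illustrative only and is not the analysis performed at Penn; the data layout (pairs of patient and ordering provider identifiers), the identifiers themselves, and the function name are assumptions. Because the rule is a threshold on order share, more than one APP can be attributed to the same patient, and a patient can have no attributed APP.

```python
from collections import Counter, defaultdict

ATTRIBUTION_THRESHOLD = 0.25  # "more than 25% of the orders", per the text

def attribute_patients(orders, app_ids, threshold=ATTRIBUTION_THRESHOLD):
    """Return {patient_id: set of APP ids attributed to that patient}.

    `orders` is an iterable of (patient_id, ordering_provider_id) pairs;
    `app_ids` is the set of provider ids that belong to APPs.
    Both layouts are hypothetical.
    """
    orders_by_patient = defaultdict(Counter)
    for patient_id, provider_id in orders:
        orders_by_patient[patient_id][provider_id] += 1

    attribution = {}
    for patient_id, counts in orders_by_patient.items():
        total = sum(counts.values())
        # Strictly greater than the threshold, matching "more than 25%".
        attribution[patient_id] = {
            provider_id
            for provider_id, n in counts.items()
            if provider_id in app_ids and n / total > threshold
        }
    return attribution

# Illustrative (made-up) orders: pt1 has 10 orders, pt2 has 5.
sample = (
    [("pt1", "app_1")] * 6 + [("pt1", "md_1")] * 3 + [("pt1", "app_2")] * 1
    + [("pt2", "md_1")] * 4 + [("pt2", "app_2")] * 1
)
result = attribute_patients(sample, app_ids={"app_1", "app_2"})
print(result)
```

Here `app_1` wrote 60% of the orders for `pt1` and is attributed, while `app_2` wrote 10% for `pt1` and 20% for `pt2` and is attributed to neither patient.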

APPs are able to generate value in all settings of care. At the organization, the future of measuring the quality impact of APPs will be focused on this value proposition and on aligning the work with organizational goals. Certainly, that includes team-based metrics such as mortality, LOS, hospital complications, and reduction in unnecessary variations of care. However, it also includes access to care, care coordination across settings and time, throughput and efficiency, maximizing revenue, and managing total cost of care. In the institution's experience, outcomes and efficiencies improve when APPs are introduced onto care teams. An ongoing focus is needed on developing and testing models of care to create the workforce of the future, one that meets the needs of the profession, patients, and organizations. This focus is needed to ensure the continued ability to identify the impact of APRN care on access, availability, cost, outcomes, and quality.

TABLE 1.1 Example of a Provider-Specific Report Card to Showcase APP Outcomes

DEVELOPING QUALITY METRICS: INSTITUTIONAL EXEMPLAR, CAROLINAS HEALTHCARE SYSTEM


Carolinas HealthCare System (CHS) is a large health care system that serves patients on a complete care continuum. With more than 40 hospitals and 900 care locations, the opportunities for APRNs and PAs (APPs) are tremendous. Nearly 1,700 APPs are an integral part of the health care delivery system of CHS across seven care divisions and service lines.

Given the size of CHS and the diversity of services delivered by APPs, creating a process for measuring value and quality is complex. In 2016, the Medical Group Division created an APP committee to help steer APP practice; one of its initial projects was to develop a system to evaluate the value created by APPs. It was decided that a formal APP dashboard would be created first, to assess the current state of the APP workforce and begin evaluating its contribution to the organization.

A subcommittee was created that involved APPs, information technology, physicians, quality personnel, and the chief nursing informatics officer. It was decided to focus on the following seven categories: demographics, education, research, productivity, quality, engagement, and patient satisfaction. While this information was reported previously in multiple databases, the goal was to create a single dashboard that could show these metrics for the overall APP workforce, but also be subdivided by care division and service line.

Demographics is the first area of metrics to be added to the dashboard. It is to include routine demographic data such as age, sex, years of experience as an APP, years of service to CHS, and ethnic background. An additional data point will include type of board certification of each provider. Demographic data is critical for evaluating diversity and succession planning needs as the APP workforce grows.

Education of the APP (master’s degree, doctor of nursing practice [DNP], or PhD) serves as the second group of metrics to be evaluated. As the nursing workforce seeks to double its number of doctorally prepared nurses, the organization wants to track this as well. Additionally, the dashboard will measure the number of APP student preceptor hours and potentially the number of lectures provided by the APPs to enhance medical education.

Research is the third element added to the APP dashboard. A metric to assess the number of research studies in which an APP serves as either a principal investigator or coinvestigator will be evaluated. National, state, and local conference presentations as well as publications will also be tracked.

Productivity is the fourth element to be considered in the APP dashboard, and perhaps is the most difficult given the variety of practice locations of the CHS APPs. The committee assessed that there would have to be separate productivity assessments for the ambulatory and inpatient care teams. In the ambulatory setting, productivity can be tracked somewhat easily given that many APPs are responsible for their own patient panels.

In the inpatient setting, however, productivity is much more difficult to assess, given that most of the care is carried out in teams, leading to shared billing that is often attributed to a physician partner rather than the APP. The committee looked to the electronic medical record (EMR) to assess productivity within the inpatient setting. If an APP is the primary author of a history and physical, progress note, discharge summary, or procedure note, productivity is attributed internally to that APP on the basis of these notes in the EMR. The procedure note assessment is also very helpful in terms of reappointment for medical staff privileges.
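The note-based attribution described here amounts to counting, for each APP, the notes of the four named types on which that APP is the primary author. The following is a minimal sketch under that reading; it is not the CHS implementation, and the field names, note-type labels, and sample records are hypothetical.

```python
from collections import Counter

# The four note types named in the text; the labels themselves are assumed.
ATTRIBUTABLE_NOTE_TYPES = {
    "history_and_physical",
    "progress_note",
    "discharge_summary",
    "procedure_note",
}

def inpatient_productivity(notes, app_ids):
    """Count attributable notes per APP, broken down by note type.

    `notes` is an iterable of dicts with hypothetical keys
    'primary_author' and 'note_type'; `app_ids` is the set of APP ids.
    """
    counts = {}
    for note in notes:
        author = note["primary_author"]
        if author in app_ids and note["note_type"] in ATTRIBUTABLE_NOTE_TYPES:
            counts.setdefault(author, Counter())[note["note_type"]] += 1
    return counts

# Illustrative (made-up) EMR note records.
sample_notes = [
    {"primary_author": "app_1", "note_type": "progress_note"},
    {"primary_author": "app_1", "note_type": "progress_note"},
    {"primary_author": "app_1", "note_type": "procedure_note"},
    {"primary_author": "md_1", "note_type": "discharge_summary"},
    {"primary_author": "app_2", "note_type": "nursing_note"},
]
productivity = inpatient_productivity(sample_notes, app_ids={"app_1", "app_2"})
print(productivity)
```

Keeping the counts separated by note type preserves the procedure note tally, which the text identifies as useful for reappointment of medical staff privileges.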

Quality assessment also diverged by care setting. In the ambulatory setting, quality metrics are assigned to the individual APP by care division and service line and are the same metrics measured for physician colleagues. In the inpatient setting, quality metrics are to be assessed in the initial phase in the same way as for physician providers; examples include LOS, discharge order by 10 a.m., and adherence to the diabetes mellitus order set.

Engagement is the sixth category included in the APP dashboard, covering the engagement scores of the APPs overall and the engagement scores of each care division and service line. Combined with the turnover rates of the individual areas of practice, this will help the APP committee target areas of concern for APPs to prevent unnecessary turnover. In addition to APP engagement, the committee evaluates the satisfaction scores of physicians who work with APPs versus those who do not have APP teammates.

Patient satisfaction is the last metric to be added to the dashboard. Individual patient satisfaction scores are to be attributed to the APP in the ambulatory setting, but again are difficult to assess in the inpatient setting given the team-based approach. A new section is to be added to the inpatient surveys asking whether an APP was involved in the patient’s care. While patient satisfaction will not be directly attributed to an individual APP, at a minimum, feedback related to satisfaction with the APP team will be acquired.

By creating a comprehensive dashboard that spans the entire care continuum in which APPs provide care, the organization can assess its advanced practice workforce in detail. The value of APPs to the organization is much broader than revenue and this dashboard works to show all aspects of their contribution. Finally, the dashboard serves as a data pool to aid in strategic planning and workforce development of this vital component of the overall provider workforce.

DEVELOPING QUALITY METRICS: INSTITUTIONAL EXEMPLAR, MEMORIAL SLOAN KETTERING CANCER CENTER


Memorial Sloan Kettering Cancer Center (MSKCC) is a 471-bed oncologic specialty hospital with more than 450 APPs. The critical care APPs, who account for 41.7 full-time equivalents (FTEs), staff a 20-bed mixed medical–surgical ICU and a 35-bed postanesthesia care unit (PACU) and lead the hospital’s rapid response and daytime code team. In total, there are 30 NPs and six PAs, in addition to five NP specialists, who manage education, scheduling, and practice, and one program manager, who manages the hospital-wide sepsis program. The critical care APP program is managed by an NP coordinator.

In the critical care unit at MSKCC, as at many other institutions, APP-specific quality has historically been challenging to isolate and measure. The APPs work in conjunction with ICU intensivists; however, their role as rapid response team (RRT) leaders is mainly autonomous, which prompted the exploration of novel quality metrics.

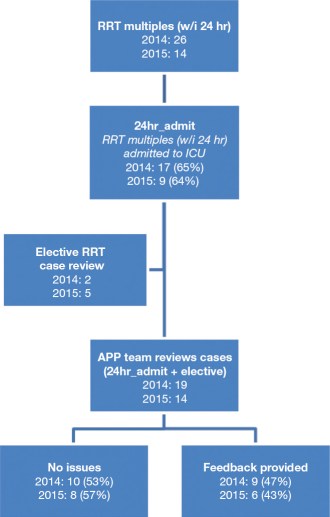

In 2014, the critical care APP group analyzed hospital-wide RRT data, focusing on RRT multiples, defined as subsequent call(s) occurring within 24 hours of one another. Multiples in which the later RRT call resulted in an ICU admission were classified as “24hr_admit” (see Figure 1.2).

The metric, tracked by provider, was expressed as a percentage: the number of multiple calls divided by the total number of calls within a month. The 24hr_admit multiples were then reviewed during monthly staff meetings as case presentations, followed by open-ended discussion and assessment of the appropriateness of care (24hr_admit_reviewed; see Figure 1.3).
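The RRT multiples metric can be sketched as follows. This is an illustration under stated assumptions, not the MSKCC method: it assumes that a call counts as a "multiple" when it follows an earlier call for the same patient within 24 hours, that only the repeat call (not the first call) is counted in the numerator, and that the list passed in holds one month of calls. The field names and sample data are hypothetical, and grouping by provider is omitted for brevity.

```python
from datetime import datetime, timedelta

def rrt_multiples(calls, window=timedelta(hours=24)):
    """Flag repeat RRT calls and compute the multiples percentage.

    `calls` is a list of dicts with hypothetical keys:
    'patient', 'time' (datetime), and 'icu_admit' (bool).
    """
    last_call_time = {}
    multiples = []
    admits = []
    for call in sorted(calls, key=lambda c: c["time"]):
        previous = last_call_time.get(call["patient"])
        if previous is not None and call["time"] - previous <= window:
            multiples.append(call)
            # Repeat call that ended in ICU admission: a "24hr_admit" case.
            if call["icu_admit"]:
                admits.append(call)
        last_call_time[call["patient"]] = call["time"]
    pct = 100.0 * len(multiples) / len(calls) if calls else 0.0
    return {"pct_multiples": pct, "multiples": multiples, "24hr_admit": admits}

# Illustrative (made-up) calls for one month.
may_calls = [
    {"patient": "pt1", "time": datetime(2014, 5, 1, 8, 0), "icu_admit": False},
    {"patient": "pt1", "time": datetime(2014, 5, 1, 20, 0), "icu_admit": True},
    {"patient": "pt2", "time": datetime(2014, 5, 3, 9, 0), "icu_admit": False},
    {"patient": "pt2", "time": datetime(2014, 5, 5, 9, 0), "icu_admit": True},
    {"patient": "pt3", "time": datetime(2014, 5, 10, 10, 0), "icu_admit": False},
]
summary = rrt_multiples(may_calls)
print(summary["pct_multiples"], len(summary["24hr_admit"]))
```

The `window` parameter is kept adjustable so that a different review window can be tried without changing the logic.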


FIGURE 1.2 APP RRT metric methodology.

APP, advanced practice provider; ICU, intensive care unit; RRT, rapid response team; w/i, within.

FIGURE 1.3 Individual case review feedback. This figure illustrates monthly staff-meeting case review recommendations. The most common issues identified were insufficient documentation and poor clinical assessment, both of which decreased by 50% the subsequent year. In 2014, 47% of the cases had quality of care issues requiring feedback compared to 43% in 2015.

GOC, goals of care.

TABLE 1.2 APP RRT Metric Before (24hr_admit) and After Reviewed (24hr_admit_reviewed)

Data were further processed by removing the number of cases where appropriate care was rendered. All feedback was provided to the clinicians regardless of their presence during staff-meeting review. Cases were also reviewed that were internally flagged, either because of unexpected outcomes or internal staff suggestions. The process of case review was found to be extremely valuable to the staff. In total, 19 cases were reviewed in 2014 and 14 cases in 2015 (see Table 1.2).

LESSONS LEARNED


In the first 2 years of tracking and reviewing APP-specific quality metrics, there was a noted increase in elective case review. This was thought to occur because the team anecdotally found the review process to be both educational and appealing, consequently gaining confidence in case presentation and discussion.

It was also noted that the RRT flagged cases (24hr_admit) decreased from 19 to 14, which may have been a result of the review process itself. However, because the process of review is crucial to the development and quality of care rendered, it has been proposed to increase the RRT ICU admit window from 24 hours to 36 hours to capture more cases for review.

It is important to understand that the initial phase of APP metric utilization should focus not on improving quality of care but on creating a seamless process, although a small initial decrease in quality of care issues was seen (from 47% to 43% of reviewed cases). A structured APP metric and improvement method will, in turn, steer improvement in the quality of APP patient care.

In addition, it was helpful to provide a review template, since many of the APPs are new to providing an objective quality analysis. By implementing a template and providing a quick orientation, review presentations have improved and become more efficient in achieving unbiased scholarly discussion.

PRACTICE-SPECIFIC QUALITY METRICS


As described in the exemplars, measuring outcomes of APRN practice involves identifying and choosing metrics to be monitored, along with forming a process to track and monitor the metrics. Identifying practice-specific metrics is a strategy that can be used to define the impact of the APRN role. Exhibit 1.4 compares examples of traditional, quality and safety, and role-specific metrics.

Choosing Wisely®


An emerging opportunity to showcase the impact of the APRN role is through implementing the Choosing Wisely recommendations. The American Board of Internal Medicine Foundation established the Choosing Wisely campaign in 2012 to promote judicious testing and to reduce unnecessary treatment, such as antibiotic overuse (www.choosingwisely.org/about-us). The Choosing Wisely campaign identifies tests, procedures, and treatments that are commonly used but whose necessity should be questioned. More than 70 specialty organizations have identified recommendations to improve decision making and promote appropriate patient-centered care. Each society has developed a list of five to 10 tests, treatments, or services that are commonly overused (Cassel & Guest, 2012). Choosing Wisely initiatives have included best practice campaigns, quality improvement projects, and formal research studies within the United States and globally.

EXHIBIT 1.4 Examples of Metrics Used to Highlight Impact of APRN Role

Traditional Metrics:

Length of stay

Readmission rates

Quality/Safety Metrics:

Catheter-associated urinary tract infection rates

Pressure ulcer incidence

Postsurgical glycemic control

Patient satisfaction rates

Role-Specific Metrics:

APRN-led CHF clinic: rates of patient follow-up; ED and hospital readmission rates

APRN 24/7 ICU service: blood transfusion rates; chest-x-ray use; daily lab use; unit discharges by noon

APRN unit-specific staff nurse engagement scores

Patient-reported symptoms with APRN cardiac surgery follow-up

APRN, advanced practice registered nurse; CHF, congestive heart failure; ED, emergency department; ICU, intensive care unit.

An APRN-led initiative was launched in 2015 at VUMC working in conjunction with an interdisciplinary Choosing Wisely committee. A multidisciplinary daily lab reduction initiative that was completed at the institution served as a guide for implementing the APRN Choosing Wisely project (Iams et al., 2016). For a 12-month period, lab and chest-x-ray use were tracked in six ICUs and in several specialty units to assess the impact of APRN-led unit-based projects. Data were tracked on lab and x-ray use and reviewed at monthly taskforce meetings. An interdisciplinary committee including medical, nursing, quality, lab services, and data analyst support reviewed key metrics on a monthly basis in order to refine data collection and review ongoing project results. Overall, the APRN-led initiative resulted in increased clinician awareness and better ordering practices with a decrease in unnecessary testing and care measures (Kleinpell et al., in press).

As a result of the initiative’s success, the Vanderbilt Advanced Practice Nursing Collaborative was launched to enable APRN teams at other institutions to implement a Choosing Wisely initiative, track outcomes, and disseminate results. Participating teams will identify up to three lab or diagnostic test measures for which to target a reduction in unnecessary ordering, or another Choosing Wisely recommendation. Teams register online through Research Electronic Data Capture (REDCap) to participate and to gain access to the collaborative materials. These materials include sample flyers, a sample slide deck, examples of data tracking and displays, and lessons learned by Vanderbilt APRN teams. For more information, visit the collaborative website (www.mc.vanderbilt.edu/aprnchoosingwisely).

SUMMARY


Health care restructuring continues to change the way in which care is delivered, and as APRNs’ roles expand, the measurement of outcomes is an important parameter by which APRN care can be evaluated. Moreover, as APRNs are involved in providing care to a variety of patient groups and in various settings, they are often the most familiar with the clinical problems that need to be studied and are therefore the ideal practitioners to participate in the development of outcome-based initiatives (Resnick, 2006). Knowledge of the process of outcome measurement and of available resources is essential for all APRNs regardless of practice specialty or setting. Practice-specific quality metrics and initiatives such as implementation of the Choosing Wisely recommendations can be used to define the impact of the APRN role. The additional chapters in this book outline further examples of practice-based outcome assessments related to APRN practice, including indicators monitored and how outcome assessments were conducted, as well as sources for identifying outcome-related tools.